The Flume team decided on a different approach with FileChannel. Flume is a transactional system, and multiple events can be either Put or Taken in a single transaction. The batch size can be used to control throughput. Using large batch sizes, Flume can move data through a flow with no data loss and high throughput. The batch size is completely controlled by the client. This is an approach users of RDBMSs will be familiar with.

A Flume transaction consists of either Puts or Takes, but not both, and either a commit or a rollback. Each transaction implements both a Put and Take method.
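To make the transaction rules concrete, here is a minimal in-memory sketch. It is not Flume's code or API: `ToyChannel`, `ToyTransaction`, `put_batch`, and `take_batch` are hypothetical names invented for this illustration. It only models the semantics described above: a transaction holds Puts or Takes but not both, a commit makes the batch take effect, and a rollback undoes it.

```python
from collections import deque


class ToyChannel:
    """In-memory stand-in for a channel (hypothetical, not Flume's implementation)."""

    def __init__(self):
        self.queue = deque()

    def transaction(self):
        return ToyTransaction(self)


class ToyTransaction:
    """Holds either puts or takes (never both) until commit or rollback."""

    def __init__(self, channel):
        self.channel = channel
        self.puts = []   # events staged for the channel, not yet visible
        self.takes = []  # events removed from the channel, not yet acknowledged

    def put(self, event):
        if self.takes:
            raise RuntimeError("transaction already contains takes")
        self.puts.append(event)

    def take(self):
        if self.puts:
            raise RuntimeError("transaction already contains puts")
        if not self.channel.queue:
            return None
        event = self.channel.queue.popleft()
        self.takes.append(event)
        return event

    def commit(self):
        # Staged puts become visible; taken events are gone for good.
        self.channel.queue.extend(self.puts)
        self.puts, self.takes = [], []

    def rollback(self):
        # Staged puts are discarded; taken events return to the head in order.
        self.channel.queue.extendleft(reversed(self.takes))
        self.puts, self.takes = [], []


def put_batch(channel, events):
    """Source side: the caller chooses the batch size by choosing len(events)."""
    tx = channel.transaction()
    try:
        for event in events:
            tx.put(event)
        tx.commit()
    except Exception:
        tx.rollback()
        raise


def take_batch(channel, batch_size, deliver):
    """Sink side: take up to batch_size events, commit only if delivery succeeds."""
    tx = channel.transaction()
    try:
        batch = []
        for _ in range(batch_size):
            event = tx.take()
            if event is None:
                break
            batch.append(event)
        deliver(batch)
        tx.commit()
        return batch
    except Exception:
        tx.rollback()
        raise
```

In this sketch, if `deliver` raises, the rollback puts the taken events back at the head of the queue, so a later attempt sees them again in the same order. That is the property that lets a large batch move through without loss even when the downstream write fails.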

A typical example would be a webserver writing events to a Source via RPC (e.g. Avro Source), the Source writing to MemoryChannel, and HDFS Sink consuming the events and writing them to HDFS.

MemoryChannel provides high throughput but loses data in the event of a crash or loss of power. As such, the development of a persistent Channel was desired. FileChannel was implemented in FLUME-1085. The goal of FileChannel is to provide a reliable high throughput channel. FileChannel guarantees that when a transaction is committed, no data will be lost due to a subsequent crash or loss of power.

It's important to note that FileChannel does not do any replication of data itself. As such, it is only as reliable as the underlying disks. Users who use FileChannel because of its durability should take this into account when purchasing and configuring hardware. The underlying disks should be RAID, SAN, or similar.

Many systems trade a small amount of data loss (fsync from memory to disk every few seconds, for example) for higher throughput.
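The difference between syncing every few seconds and syncing at commit can be sketched in a few lines. This is an illustration of the general technique only, not FileChannel's actual on-disk format or code: `commit_batch` and `replay` are hypothetical helpers, and the one-event-per-line log assumes events contain no newlines.

```python
import os
import tempfile


def commit_batch(log_path, events):
    """Append a batch of events and return only after asking the OS to persist it."""
    with open(log_path, "ab") as log:
        for event in events:
            log.write(event.encode("utf-8") + b"\n")
        log.flush()             # push Python's buffer to the operating system
        os.fsync(log.fileno())  # ask the OS to flush the file to the storage device


def replay(log_path):
    """Read back every committed event, as a restart after a crash would."""
    if not os.path.exists(log_path):
        return []
    with open(log_path, "rb") as log:
        return [line.rstrip(b"\n").decode("utf-8") for line in log]


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "events.log")
    commit_batch(path, ["event-1", "event-2"])  # one sync for the whole batch
    print(replay(path))
```

Because there is one sync per committed batch rather than one per event, a larger batch spreads the cost of the sync over more events, which is why batch size acts as the throughput control. The sync only protects against a crash or power loss; it does nothing if the disk itself fails.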

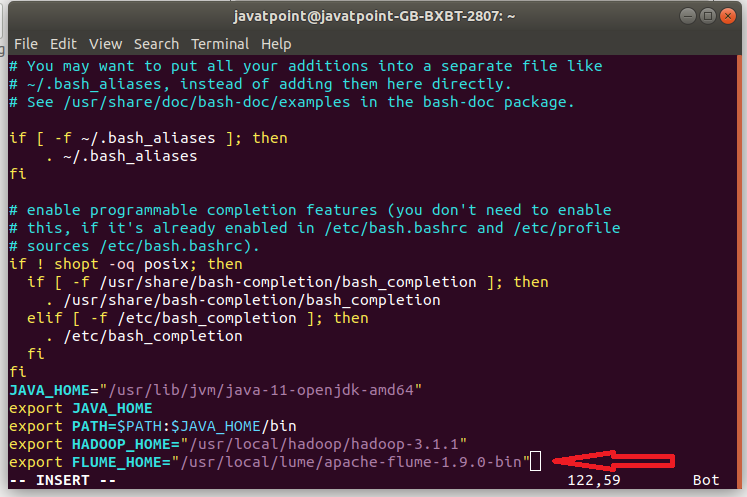

Flume uses a simple extensible data model that allows for online analytic applications.

FileChannel is a persistent Flume channel that supports writing to multiple disks in parallel and encryption.

When using Flume, each flow has a Source, Channel, and Sink.

This blog post is about Apache Flume’s File Channel. Apache Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of log data. It has a simple and flexible architecture based on streaming data flows. It is robust and fault tolerant with tunable reliability mechanisms and many failover and recovery mechanisms.
